On two occasions I have been asked, “Pray, Mr. Babbage, if you put into the machine wrong figures, will the right answers come out?” I am not able rightly to apprehend the kind of confusion of ideas that could provoke such a question.

—Charles Babbage, Passages from the Life of a Philosopher (Source: Wikipedia)

The old computer-industry acronym GIGO (Garbage In, Garbage Out) is no longer a golden rule. The paradigm has shifted, and “bad” input data no longer always means bad results.

How to Work with “GI”

Obviously, data has to have some consistency before you can work with it. While I was working with a colleague on a rule application the other week, we ran into a data problem: our input data used a modeling shortcut (multiple strings of data stuffed into one text field) that was going to make rule authoring uncomfortable. Luckily our garbage-in data strings were delimited, but authoring rules directly against this data would still have been difficult. We wanted to be able to create our business logic independent of how the data entered our ruleapp.

To deal with this problem, we had to make a choice:

A) Normalize the incoming data model

B) Sprinkle data manipulation tricks throughout our rules

C) Use initial rules to shape and stage the data

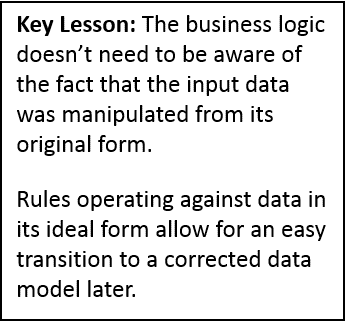

Since A) was impractical, and B) meant that we’d need to write difficult-to-understand (and maintain) rules, we chose C). Implementation of that initial decision saved us numerous headaches later. And we realized that when it all worked as planned, shaping our data would mean that we’d go from GIGO to GI does not always equal GO!

Or GI≠GO for short.

Data Shaping

Specifically, our ServiceCode field contained a variety of different strings of data. Sometimes it contained one string, but often it contained several. Thankfully, these ServiceCode data strings were always delimited by a plus sign (+). Our Garbage In turned out to be process-friendly.

So we decided to break these strings apart and load the results of those strings into a brand new collection, extending our data model. Once the strings populated this collection, we would then be able to author rules against individual members without care for how the original data came into our ruleapp. A meaningful data structure would lead us to meaningful rules that were themselves free of distracting data manipulation techniques.

How We Did It

We parsed our data strings using a UDF (user-defined function). If you’re not familiar with irAuthor’s UDF capabilities, this video from InRule’s support site does a good job explaining them. I’ve included a screenshot of our specific UDF, which takes an input variable ServiceCode, splits it into chunks wherever it finds a “+” in the string, and assigns those chunks to a Code field within a collection.
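For readers who want to see the same split-and-load idea outside irAuthor, here is a minimal Python sketch. It is not the UDF from our project: the class names `Order` and `ServiceCodeEntry`, the helper `split_service_code`, and the sample codes are all hypothetical, and skipping empty chunks is an assumption about how stray delimiters should be handled.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ServiceCodeEntry:
    """One member of the staged collection (hypothetical name)."""
    code: str


@dataclass
class Order:
    """Hypothetical entity holding the raw field and the staged collection."""
    service_code: str
    service_codes: List[ServiceCodeEntry] = field(default_factory=list)


def split_service_code(order: Order, delimiter: str = "+") -> None:
    """Split the raw ServiceCode string and load each chunk into the collection."""
    order.service_codes.clear()
    for chunk in order.service_code.split(delimiter):
        chunk = chunk.strip()
        if chunk:  # assumption: ignore empty chunks, e.g. from "A++B"
            order.service_codes.append(ServiceCodeEntry(code=chunk))


# Usage with made-up codes: one raw string becomes three collection members.
order = Order(service_code="LAB12+XRAY+PT3")
split_service_code(order)
```

Once the collection is populated, later logic only needs to look at `order.service_codes` and never has to know about the delimiter.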

Smooth Sailing

After this data shaping exercise, our project became easy. We ran the UDF in rules to break the ServiceCode field down into its component strings. We then ran a series of “contains” business language rules against each string to set values on other fields within our collection.
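The “contains” step can be pictured with a short Python sketch. The field names (`is_lab`, `is_imaging`) and the substrings being matched are invented for illustration and are not the ones from our ruleapp; the point is only that each check runs against one already-separated string.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ServiceCodeEntry:
    """One staged collection member; the flag fields are hypothetical."""
    code: str
    is_lab: bool = False
    is_imaging: bool = False


def apply_contains_rules(entries: List[ServiceCodeEntry]) -> List[ServiceCodeEntry]:
    """Set flags on each member based on simple substring ("contains") checks."""
    for entry in entries:
        entry.is_lab = "LAB" in entry.code
        entry.is_imaging = "XRAY" in entry.code or "MRI" in entry.code
    return entries


# Usage with made-up codes that have already been split apart.
staged = apply_contains_rules(
    [ServiceCodeEntry("LAB12"), ServiceCodeEntry("XRAY"), ServiceCodeEntry("PT3")]
)
```

Because the flags live on the collection members, downstream conditions can test `entry.is_lab` directly instead of repeating string matching.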

Once we had a collection of separate fields that contained the previously combined ServiceCode information, we were able to write more meaningful conditional rules against those fields. Those subsequent rules came a lot closer to representing the actual business processes in our system.

Final Lessons

Garbage In (GI) often forces us to write awkward rules that are difficult to understand and maintain. Thankfully, the flexibility of a rules engine combined with the creativity of an experienced rule author can turn GI into much friendlier input. And friendlier input brings friendlier rules. Garbage In does not always require us to produce Garbage Out.

Thanks for reading. If you have further questions about UDFs, please consult the InRule online help. For any other feedback, please feel free to email the author.