We are always looking for ways we can do better.

At InRule, our goal is to make automation accessible. Rule authoring is an iterative process. As we build our business rules, we are always looking for ways to improve not only our accuracy but also the performance of our rule applications.

Fortunately, our integrated testing tool (irVerify) can help.

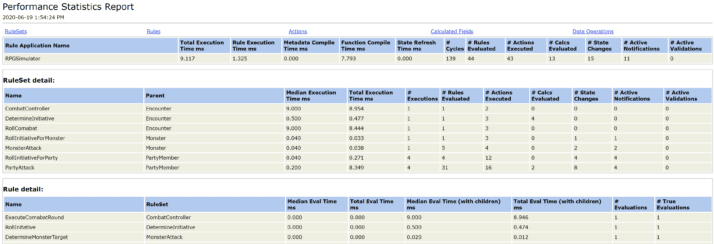

Performance Statistics Report

One of the best tools for analyzing performance is the Performance Statistics report, which gives a granular breakdown of the timing of your rule application's execution.

As an example, let’s say I want to analyze a rule application to find ways to improve its performance. In a previous blog post, as part of a rule modeling exercise, I created a rule application to automate a combat encounter for a table-top role-playing game. I will use that rule application to demonstrate how the Performance Statistics report can guide improvements.

After I load test data and execute rules, I can run the Performance Statistics report to get granular timing detail for that execution of the rule application.

This report goes beyond the ruleset level: it also provides timing for individual rules, calculations, and data element operations. That level of detail works something like a heat map, highlighting the areas of the rule application that consume the most execution time and are therefore the best candidates for improvement.
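To make the heat-map idea concrete, here is a minimal Python sketch of the kind of ranking the report lets you do by eye. It is not irVerify's output format or API. The record layout, the `RollToHit` element, and every duration are hypothetical values invented for illustration; only the PartyAttack and ResolveSavingThrow names come from this post.

```python
from collections import defaultdict

# Hypothetical timing records: (ruleset, element, duration in ms).
# The durations are invented; they are not taken from a real report.
records = [
    ("PartyAttack", "ResolveSavingThrow", 0.90),
    ("PartyAttack", "RollToHit", 0.30),
    ("PartyAttack", "ResolveSavingThrow", 0.85),
    ("PartyAttack", "RollToHit", 0.20),
]


def rank_hotspots(rows):
    """Sum durations per (ruleset, element) and sort slowest first."""
    totals = defaultdict(float)
    for ruleset, element, duration_ms in rows:
        totals[(ruleset, element)] += duration_ms
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


hotspots = rank_hotspots(records)
for (ruleset, element), total_ms in hotspots:
    print(f"{ruleset}.{element}: {total_ms:.2f} ms")
```

Summing repeated executions matters here: an element that is individually cheap can still dominate the total if it runs many times.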

Looking at my report, I can see that the Roll Combat (and specifically the PartyAttack ruleset) takes the longest to execute, so I will focus on that first.

Experiment with Different Techniques

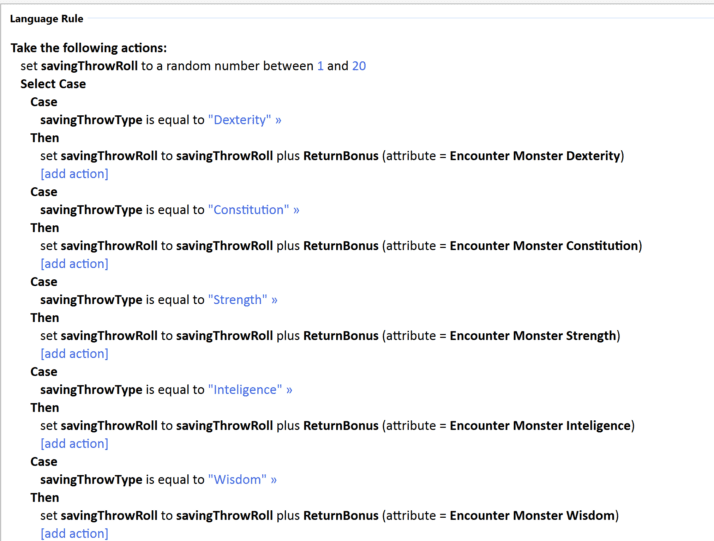

Upon closer inspection of the details, we can see that the ResolveSavingThrow rule, executed by the PartyAttack ruleset, is the child rule consuming the most time.

The rule is evaluated multiple times, and looking at it more closely, I see that I used a select case to evaluate the possible conditions.

A potential alternative that may help performance would be to use a different construct to perform this evaluation, such as a decision table. But will it work?

There is only one way to tell and the band They Might Be Giants summarizes it best: “Put It to the Test”.

I can use the “Enabled” setting to disable the existing rule and create an alternative. For this example, I disabled the existing rule and replaced it with a single set value language rule and a simple decision table. Enabling and disabling rules will allow me to toggle from one model to another and test each technique.
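For readers who think in code, the two models can be sketched in Python as a chain of branches versus a table lookup, with a flag standing in for the “Enabled” toggle. This is only an analogy and says nothing about how InRule evaluates either construct internally. The saving-throw conditions and outcomes are hypothetical, since the actual rule is not shown in this post, and all function and variable names are made up for the example.

```python
def resolve_with_branches(roll, target):
    """Select-case style: test each condition in order until one matches."""
    if roll == 1:
        return "critical failure"
    elif roll == 20:
        return "critical success"
    elif roll >= target:
        return "success"
    else:
        return "failure"


# Decision-table style: each row maps a combination of condition results
# to an outcome. Key layout: (natural 1, natural 20, roll >= target).
DECISION_TABLE = {
    (True, False, False): "critical failure",
    (True, False, True): "critical failure",
    (False, True, False): "critical success",
    (False, True, True): "critical success",
    (False, False, True): "success",
    (False, False, False): "failure",
}


def resolve_with_table(roll, target):
    """Evaluate the conditions once, then look up the matching row."""
    return DECISION_TABLE[(roll == 1, roll == 20, roll >= target)]


def resolve(roll, target, use_table=False):
    """Toggle between the two models, much like enabling one rule at a time."""
    if use_table:
        return resolve_with_table(roll, target)
    return resolve_with_branches(roll, target)


print(resolve(14, 12))                  # original model
print(resolve(14, 12, use_table=True))  # alternative model
```

Before comparing speed, it is worth confirming that both models return the same outcome for every input, so that the timing comparison is between equivalent rules.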

Now, I can retest the rule each way to gauge whether there is any improvement. In the image below, I’ve included the test with the original construct.

It’s great to see an improvement, but how do I know that these two tests are truly representative?

Performance Test Suites

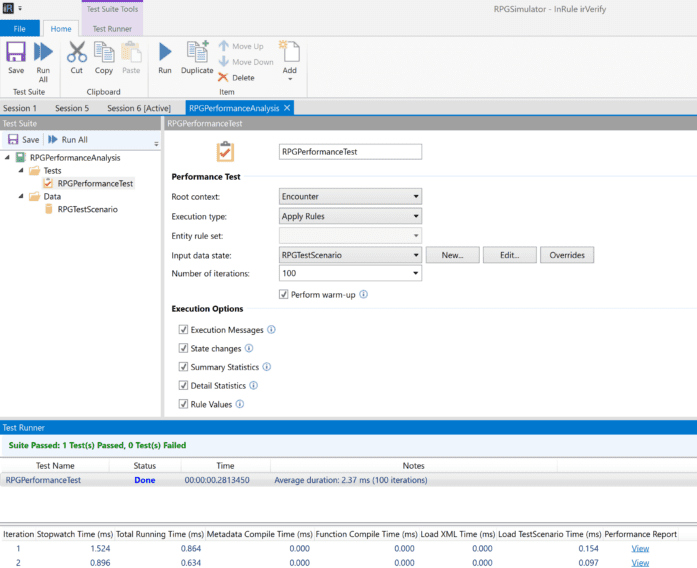

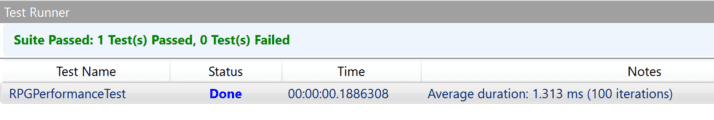

Because several factors can alter the results of any single performance test, it is often better to look at the results in aggregate. A performance test in irVerify’s test suite provides that clarity by executing multiple iterations of a test and averaging the results across the runs.

I can select how many iterations to execute, as well as how much detail to compile. Note that the performance test suite also gives the user the option to perform a warm-up. This option runs two iterations before the test begins, to eliminate any .NET overhead that could skew the results.
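The warm-up-then-average pattern is a general benchmarking technique, and a minimal Python sketch of it looks like the following. This is not irVerify's implementation: `run_performance_test`, its parameters, and `sample_workload` are hypothetical names, and the workload is a stand-in for executing rules.

```python
import statistics
import time


def run_performance_test(func, iterations=100, warm_up=True, warm_up_runs=2):
    """Time `func` over several iterations and return (average ms, samples)."""
    if warm_up:
        # Untimed runs so that one-time start-up costs do not skew the average.
        for _ in range(warm_up_runs):
            func()

    durations_ms = []
    for _ in range(iterations):
        start = time.perf_counter()
        func()
        durations_ms.append((time.perf_counter() - start) * 1000.0)

    return statistics.mean(durations_ms), durations_ms


def sample_workload():
    """Stand-in for a rule execution; any callable works here."""
    return sum(i * i for i in range(10_000))


average_ms, samples = run_performance_test(sample_workload, iterations=50)
print(f"average over {len(samples)} runs: {average_ms:.3f} ms")
```

Keeping the individual samples alongside the average makes it possible to check how much the runs vary, which is the reason for aggregating in the first place.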

Running the test suite with the new rules gives an average duration of 1.313 ms, versus 2.37 ms for the original model with the select case. That is a 44.6% improvement from using the decision table instead of the select case technique.
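The improvement figure is the reduction in average duration relative to the original duration. As a quick check of the arithmetic, using the two averages reported above (the helper name is just for illustration):

```python
def percent_improvement(before_ms, after_ms):
    """Reduction in duration, as a percentage of the original duration."""
    return (before_ms - after_ms) / before_ms * 100.0


improvement = percent_improvement(before_ms=2.37, after_ms=1.313)
print(f"{improvement:.1f}% improvement")  # prints "44.6% improvement"
```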

While I improved an already fast execution time in this example, hopefully this illustrates how easy it is to use performance tests in irVerify to identify areas of improvement in your rule application.

Happy authoring – and testing!!!