Live Webinar: Risk and Ethical AI

In almost every survey about Artificial Intelligence (AI), business leaders are extremely optimistic about how this innovation can be used to enhance operations and grow the bottom line.

Whether it’s to improve the customer experience, speed up processes, mine Big Data, or realize other potential benefits, it’s clear that forward-thinking companies have high hopes and expectations for how AI will transform the future of business.

However, as we’ve seen in the past, disruptive technology can also bring its own set of challenges. For example, machine learning dramatically enhances the power of decision engines by processing complex predictions with ease and speed, but what happens if things go off the rails?

What are the potential risks to businesses using it? What are the consequences? How would they even know if their AI is contributing to bad decision-making?

These concerns don’t mean that companies should avoid AI. They mean that companies should proceed with caution until initial concerns are addressed and the immediate pitfalls can be avoided. Specifically, with automated decisioning, businesses need to understand how and why machine learning made its predictions, for multiple reasons, including maintaining regulatory compliance and reducing the chance that unwanted bias enters the decision process.

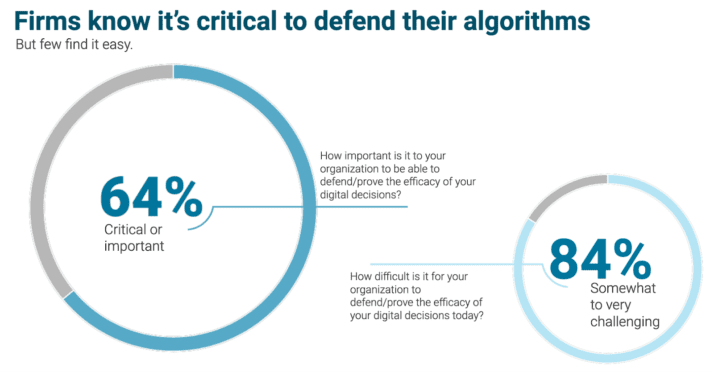

64% of business leaders know that they must be able to defend or prove the efficacy of their digital decisions, yet 84% find this a challenge.

Base: 302 US application development and delivery decision-makers with knowledge of business rule management tools. Source: A commissioned study conducted by Forrester Consulting on behalf of InRule, March 2021.

So, the question is, how does an organization best embrace these new technologies—and get the maximum benefit out of them—while also ensuring that the risks are mitigated?

Transparency in decision-making is a necessary component for the next era of decision engines—and that is one of the core philosophies driving our product roadmap here at InRule. Our explainable machine learning technology explains the “why” behind every prediction made, reducing bias from machine learning models for more accurate and effective outcomes.

This is Explainable AI, and we’ve found that decision transparency gives business leaders the confidence to use machine learning to benefit their companies rather than worry about the problems it could cause.

Register Now: Risk and Ethical AI. The Impact of Fairness Through Awareness on Bias

Webinar Presenters

Join us on December 8 to discuss these issues and more with InRule experts Theresa Benson and Danny Shayman.

In this webinar, you will learn:

- How and when your organization should utilize declarative or non-declarative AI in your digital transformation

- The global trends, proposed legislation, and other developments affecting the use of AI in the enterprise, and how they might impact you

- How to avoid traditional AI black box models using InRule’s xAI Workbench, which turns artificial intelligence into actionable intelligence in a unique way

Webinar attendees will also see a live demo of our industry-leading bias detection technology before it’s generally available.

Sign up now to join this live event

Not sure if you will be able to make it? We still invite you to register! Webinar registrants will receive a link to the webinar recording to watch on-demand at their convenience.

AI is going to become a key component for every company, in every industry. Join our webinar to learn how to reap the rewards while avoiding the issues!